OpenAI Model Deprecation: Impacts and Migration Strategies

⚡ Quick Take

OpenAI’s scheduled model deprecations are more than a technical update; they are a market signal that the era of treating specific LLM versions as permanent infrastructure is over. As developers and enterprises grapple with forced migrations, the incident exposes the architectural fragility of single-vendor AI stacks and accelerates the shift toward robust, multi-model lifecycle management.

Summary

Ever wonder why even a straightforward software update can feel like pulling the rug out from under you? OpenAI is retiring several older models and requiring both API and ChatGPT users to migrate to newer successors. Although this is part of a standard software lifecycle, it has sparked significant friction among users who rely on the unique behaviors and "feel" of specific model versions, and it is forcing a reckoning with the instability inherent in a rapidly innovating AI ecosystem.

What happened

OpenAI announced official sunset dates for certain models via its deprecation schedule and is requiring users to transition their applications and workflows to designated successor models, such as newer GPT-4 variants, before the old endpoints are shut down. The company provides technical documentation and migration guides to facilitate the process—straightforward enough on paper, but that's where the real work begins. For reference, see OpenAI's documentation: https://platform.openai.com/docs.

Why it matters now

Here's the thing: this event marks a crucial maturation point for the AI industry. It forces a shift in mindset from treating LLMs as static, magical artifacts to managing them as dynamic, versioned components in a production software stack. The pain of migration highlights the critical need for enterprise-grade change management, performance parity testing, and cost analysis—disciplines often overlooked in the rush to integrate generative AI, and ones I've seen trip up more teams than I'd like to admit.

Who is most affected

API developers and enterprises with production systems are hit hardest, facing engineering costs, potential performance regressions, and service disruption risks. ChatGPT power users also experience a loss of familiar behavior, impacting personal workflows and creative outputs—just a small shift that can throw off your whole rhythm.

The under-reported angle

While news coverage focuses on user frustration and OpenAI's official guidance, the real story is the strategic imperative it creates for de-risking AI dependencies. This isn't just an OpenAI problem; it's a systemic risk. The episode serves as a powerful catalyst for adopting multi-vendor strategies and building vendor-agnostic architectures that can withstand the constant churn of the model market—something worth pondering as we tread into even more uncertain territory.

🧠 Deep Dive

Have you ever relied on a tool so completely that swapping it out feels like losing a trusted partner? OpenAI’s decision to retire older models has brought a fundamental tension in the AI landscape to a head: the clash between relentless innovation and the need for production stability. On one side, OpenAI’s official documentation presents the move as standard technical lifecycle management—a necessary step to focus resources on more capable and efficient successors. Their guidance is clinical, offering timelines, code snippets, and migration paths for developers. On the other, as outlets like the Wall Street Journal report, a segment of users is experiencing the change as a loss, lamenting the unique personality, formatting, or creative quirks of the discontinued models that have become integral to their workflows.

This friction exposes a critical gap in how the industry has approached AI integration; it is like building a house on shifting sand. For many, a specific model like gpt-4-0613 wasn't just an API endpoint; it was a predictable collaborator. The forced migration is a wake-up call that models are not permanent fixtures. The "solution" of simply pointing to a successor model is insufficient because it ignores the subtle but critical behavioral deltas. A new model might have a different refusal rate, a different tone, or altered JSON formatting, any of which can silently break a finely tuned application. The core challenge becomes one of validating parity. That task requires reproducible prompts, performance benchmarking, and rigorous testing, which is a far cry from a simple find-and-replace on a model ID and demands more patience than most rushed projects allow.
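A parity check of this kind can be sketched as a small harness that runs the same fixed prompts through both models and flags structural differences. This is a minimal illustration under stated assumptions: `old_model` and `new_model` are hypothetical stubs standing in for real provider calls pinned to specific model IDs, and the refusal markers and required keys are assumed heuristics rather than part of any OpenAI tooling.

```python
import json
from typing import Callable, Dict, List

# Hypothetical type for a model call: prompt in, raw text out.
ModelFn = Callable[[str], str]

# Assumed heuristic markers; a real suite would need something more robust.
REFUSAL_MARKERS = ("i can't", "i cannot", "i'm sorry")


def check_output(text: str, required_keys: List[str]) -> Dict[str, bool]:
    """Run simple structural checks on one model response."""
    try:
        parsed = json.loads(text)
        valid_json = isinstance(parsed, dict)
    except json.JSONDecodeError:
        parsed, valid_json = {}, False
    return {
        "valid_json": valid_json,
        "has_keys": valid_json and all(k in parsed for k in required_keys),
        "refused": any(m in text.lower() for m in REFUSAL_MARKERS),
    }


def parity_report(old: ModelFn, new: ModelFn, prompts: List[str],
                  required_keys: List[str]) -> List[dict]:
    """Return the prompts whose checks differ between baseline and candidate."""
    diffs = []
    for prompt in prompts:
        before = check_output(old(prompt), required_keys)
        after = check_output(new(prompt), required_keys)
        if before != after:
            diffs.append({"prompt": prompt, "old": before, "new": after})
    return diffs


# Hypothetical stubs: the baseline returns bare JSON, the candidate wraps it in prose.
def old_model(prompt: str) -> str:
    return '{"sentiment": "positive", "score": 0.9}'


def new_model(prompt: str) -> str:
    return 'Sure! Here is the JSON: {"sentiment": "positive"}'


if __name__ == "__main__":
    for diff in parity_report(old_model, new_model, ["Classify: great product"],
                              ["sentiment", "score"]):
        print(diff)
```

Structural checks like these only catch the loudest regressions, such as broken JSON or a new refusal. Differences in tone or quality would still need scored evaluations or human review on top.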

The smartest teams now view this not as a one-off inconvenience but as a recurring operational drill, even if it is easier to plan for than to live through. They are adopting a site reliability engineering (SRE) lens, treating an AI model swap with the same gravity as any other critical production change. This involves implementing canaries (routing a small percentage of traffic to the new model), monitoring for regressions in quality and latency, and establishing clear rollback plans. The conversation is shifting from "Which model is best?" to "How do we build a system that can gracefully absorb the next mandatory model upgrade?", a pivot I've noticed gaining traction in recent strategy sessions.
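The canary-and-rollback pattern can be sketched in a few lines. The example below is an assumption-laden illustration, not a production router: `pick_model` and `should_roll_back` are hypothetical helpers, `successor-model` is a placeholder ID, and the 2% tolerance and 100-request minimum are arbitrary values chosen for the example.

```python
import hashlib


def pick_model(request_id: str, canary_model: str, stable_model: str,
               canary_percent: int) -> str:
    """Deterministically send a fixed share of requests to the canary model.

    Hashing the request (or user) ID keeps routing stable across retries.
    """
    digest = hashlib.sha256(request_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # bucket in the range 0..99
    return canary_model if bucket < canary_percent else stable_model


def should_roll_back(canary_errors: int, canary_total: int,
                     stable_errors: int, stable_total: int,
                     tolerance: float = 0.02, min_samples: int = 100) -> bool:
    """Signal rollback when the canary error rate exceeds stable by more than tolerance."""
    if canary_total < min_samples or stable_total == 0:
        return False  # not enough data to judge yet
    canary_rate = canary_errors / canary_total
    stable_rate = stable_errors / stable_total
    return canary_rate > stable_rate + tolerance


if __name__ == "__main__":
    # "successor-model" is a placeholder, not a real model ID.
    model = pick_model("req-12345", "successor-model", "gpt-4-0613", canary_percent=5)
    print("route to:", model)
    print("roll back:", should_roll_back(10, 200, 2, 200))
```

Here an "error" could be any failed check, such as a schema violation, a timeout, or a low quality score. The same comparison can be applied to latency percentiles.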

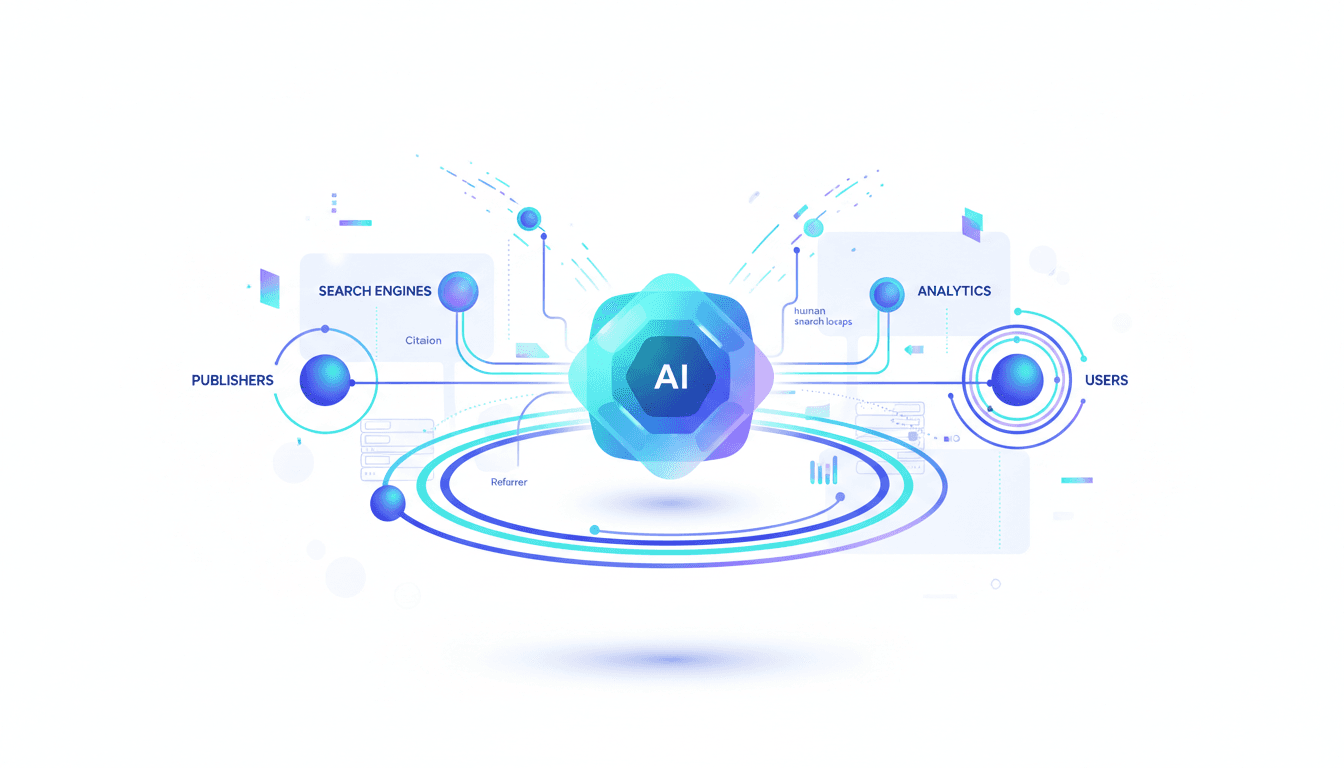

Ultimately, this leads to the most strategic takeaway: the danger of vendor lock-in. The pain of this migration is directly proportional to an organization's dependency on a single provider's specific model version. Consequently, advanced enterprises are architecting multi-vendor resilience. By building an abstraction layer that can route requests to models from OpenAI, Anthropic, Google, or Mistral based on cost, capability, or availability, they transform a vendor-driven crisis into a strategic choice. This forced upgrade is inadvertently teaching the market that true AI maturity lies not in picking a winner, but in building an architecture that assumes no model is forever—and from what I've seen, that's the mindset that will separate the leaders from the rest.
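An abstraction layer of this kind can be sketched as a registry of interchangeable backends with a routing rule. Everything in this example is hypothetical: the `Backend` and `Router` names, the capability tags, and the cost figures are invented for illustration, and each `call` would in practice wrap a real provider SDK.

```python
from dataclasses import dataclass
from typing import Callable, List, Set


@dataclass
class Backend:
    """One provider/model pair behind a common interface (hypothetical)."""
    name: str
    call: Callable[[str], str]   # prompt in, completion text out
    cost_per_1k_tokens: float    # illustrative numbers only
    capabilities: Set[str]
    available: bool = True


class Router:
    """Pick the cheapest available backend with the needed capability,
    falling back to the next candidate if a call fails."""

    def __init__(self, backends: List[Backend]) -> None:
        self.backends = list(backends)

    def candidates(self, capability: str) -> List[Backend]:
        eligible = [b for b in self.backends
                    if b.available and capability in b.capabilities]
        return sorted(eligible, key=lambda b: b.cost_per_1k_tokens)

    def complete(self, prompt: str, capability: str) -> str:
        errors = []
        for backend in self.candidates(capability):
            try:
                return backend.call(prompt)
            except Exception as exc:  # broad on purpose: any failure triggers fallback
                errors.append(f"{backend.name}: {exc}")
        raise RuntimeError("no backend could serve the request: " + "; ".join(errors))
```

With this shape, retiring a model becomes a matter of flipping `available` or removing one registry entry, provided the parity checks for the remaining backends already pass.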

📊 Stakeholders & Impact

| Stakeholder / Aspect | Impact | Insight |
|---|---|---|
| AI / LLM Providers | High | Establishes a precedent for managing model lifecycles as a standard business practice. It's a test of customer trust: balancing innovation speed with the need for enterprise stability, especially when the pace feels relentless. |
| Developers & Enterprises | High | Forces unplanned engineering work to migrate, test, and validate. It exposes the financial and operational risk of deep integration with a single, volatile model endpoint, which gives plenty of reasons to rethink those tight couplings now. |
| End Users (ChatGPT) | Medium | Experience a disruption in user experience and loss of familiar "personality" or behavior, requiring adaptation and potentially altering the perceived value of the service. It's those little inconsistencies that stick with you. |
| The AI Ecosystem | Significant | Accelerates the demand for MLOps tools focused on governance, change management, and parity testing. It strengthens the business case for multi-cloud, multi-model architectures, a shift that's long overdue, if you ask me. |

✍️ About the analysis

This i10x analysis is based on a structured review of official OpenAI documentation, mainstream news reports, and community developer feedback. It's written for technical leaders, product managers, and enterprise architects responsible for building and maintaining systems that rely on large language models—folks navigating this space day in and day out.

🔭 i10x Perspective

What if this OpenAI shake-up is just the opening act? This round of model deprecations is a dress rehearsal for the future of AI infrastructure. As models become more specialized and the pace of releases accelerates, the ability to seamlessly swap, test, and deploy them will become a defining competitive advantage. The era of the single, monolithic "do-everything" model is ending, giving way to a dynamic ecosystem of fungible AI components.

The key unresolved tension isn't whether OpenAI should stop innovating, but how enterprises can build architectures that thrive on that innovation instead of being broken by it. The winners will not be those who bet on the "right" model, but those who build systems that assume every model is temporary—it's a humbling reminder, one that lingers as we look ahead.
